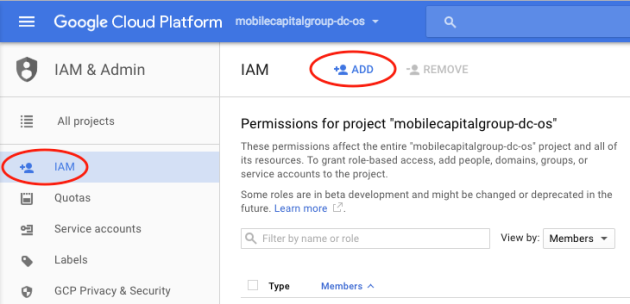

My previous post discussed setting up your Google Cloud Platform account to run DC/OS. Now we move on to installing DC/OS. Before starting, if you are new to DC/OS, take a moment to familiarize yourself with DC/OS concepts and DC/OS architecture. Also, have a look at the Mesosphere guide to getting started with DC/OS, which covers setting up DC/OS on AWS. Even though those instructions use shell scripts to generate the nodes and the Google Cloud Platform project uses Ansible scripts, the concepts are the same.

Create the DC/OS Bootstrap Machine

The bootstrap machine is where DC/OS install artifacts are configured, built, and distributed. The DC/OS installation guide includes setup instructions for many cloud platforms. The instructions for Google Cloud Platform rely on Ansible scripts from a dcos-labs project on GitHub. At the time of this writing, the GCE setup page in the DC/OS 1.8 Administrative guide was a little out of sync with the project README file, so I followed the README file instead.

Start from the Google Cloud Platform dashboard. Make sure the Google Cloud Platform project that you created for DC/OS is selected. Click the “hamburger” menu icon in the upper left corner of the page and then select Compute Engine from the menu. You need an “n1-standard-1” instance running CentOS 7 on a 10 GB disk. Follow the README instructions carefully when configuring this new VM instance.

Connecting to the DC/OS Bootstrap Instance

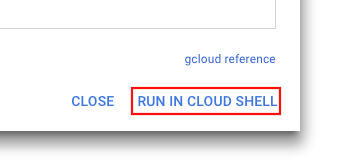

Once the DC/OS bootstrap instance starts, it will appear on the GCE VM Instance page with a green checkmark to the left of its name. To connect to the machine, either download the Cloud SDK to your desktop computer or activate Google Cloud Shell from the >_ icon near the right side of the page header.


Cloud Shell runs in an f1-micro Google Compute Engine instance and displays a command prompt at the bottom of the console page. Open the SSH drop-down list to the right of the bootstrap instance and pick View gcloud command. When the dialog opens, pick RUN IN CLOUD SHELL (or use one of the other options to ssh into the bootstrap instance).
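If you prefer to connect from a terminal with the Cloud SDK installed, the command that the dialog shows looks roughly like the one printed by this sketch. The project, zone, and instance names are placeholders that I made up for illustration; substitute your own.

```shell
#!/bin/sh
# Print the gcloud command for opening an SSH session to an instance.
# The project, zone, and instance names passed below are placeholders.
bootstrap_ssh_cmd() {
    project=$1
    zone=$2
    instance=$3
    printf 'gcloud compute ssh %s --project %s --zone %s\n' \
        "$instance" "$project" "$zone"
}

bootstrap_ssh_cmd my-dcos-project us-central1-b dcos-bootstrap
```

The function only prints the command so that you can review it before running it yourself.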

After connecting to the DC/OS Bootstrap instance, follow the instructions in the README file until you reach the configuration of the “hosts” file…

Ensure the IP address for master0 in ./hosts is the next consecutive IP from bootstrap_public_ip.

Defining the Master Node(s)

Unlike agent nodes that can be added as needed, once the master node cluster is installed, the number of master nodes cannot be changed without reinstalling the cluster. A cluster of master nodes needs a quorum to determine its leader, so you must install an odd number of nodes to avoid a “split brain” situation.
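The arithmetic behind the odd-number advice can be sketched in a few lines of shell. This assumes a simple majority quorum, which is the general rule for leader election rather than anything specific to the Ansible scripts; the function names are my own.

```shell
#!/bin/sh
# Majority quorum for a cluster of N masters: floor(N/2) + 1.
quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Number of masters that can fail while a majority still remains.
tolerated_failures() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 2 3 4 5; do
    echo "masters=$n quorum=$(quorum "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

Four masters tolerate one failure, the same as three, so an even count adds cost without adding resilience.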

It is common to use three master nodes; however, if you are using the Google Cloud Platform free trial, it only allows eight CPUs. I tested with a single 2-CPU master node, two 2-CPU private agent nodes, and one 2-CPU public agent node.

The “hosts” file defines the number of master nodes in the DC/OS cluster. The IP addresses of the master nodes should be contiguous, starting immediately after the IP address of the bootstrap machine. Here is an example of a three-node cluster.

[masters]
master0 ip=10.128.0.3
master1 ip=10.128.0.4
master2 ip=10.128.0.5
[agents]
agent[0000:9999]
[bootstrap]
bootstrap
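To avoid typing the addresses by hand, a small helper can generate the masters section from the bootstrap machine's IP. This is only a sketch: the function name is hypothetical, the bootstrap address 10.128.0.2 is inferred from the example rather than taken from the README, and the helper only increments the last octet.

```shell
#!/bin/sh
# Print a [masters] inventory section whose IPs follow the bootstrap IP.
# Sketch only: increments the last octet and does not handle rollover.
masters_section() {
    bootstrap_ip=$1
    count=$2
    prefix=${bootstrap_ip%.*}     # everything before the last dot
    last=${bootstrap_ip##*.}      # the last octet
    echo "[masters]"
    i=0
    while [ "$i" -lt "$count" ]; do
        echo "master$i ip=$prefix.$(( last + i + 1 ))"
        i=$(( i + 1 ))
    done
}

# Assumed bootstrap address; replace with your own.
masters_section 10.128.0.2 3
```

With these inputs the output matches the masters section of the three-node example.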

Defining the Agent Nodes

The README file says:

Please make appropriate changes to dcos_gce/group_vars/all. You need to review project, subnet, login_name, bootstrap_public_ip & zone

The variables are explained at the bottom of the README file. At a minimum, you must set the following variables in the “./group_vars/all” file.

- Project.

- Login name.

- Bootstrap public IP.

- Zone.
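Before running the playbook, it can be worth checking that none of these values was left blank. The sketch below assumes the file uses simple top-level "key: value" lines and that the keys are named project, login_name, bootstrap_public_ip, and zone, as in the README excerpt; the function name is my own.

```shell
#!/bin/sh
# Report which required keys are missing or empty in a vars file.
# Assumes simple top-level "key: value" lines; this is not a YAML parser.
missing_vars() {
    file=$1
    for key in project login_name bootstrap_public_ip zone; do
        # A key counts as set when a non-blank, non-comment value follows it.
        if ! grep -Eq "^$key:[[:space:]]*[^[:space:]#]" "$file"; then
            echo "$key"
        fi
    done
}

# Check the real file only when it exists in the current directory.
if [ -f ./group_vars/all ]; then
    missing_vars ./group_vars/all
fi
```

An empty result means all four variables have a value; it does not tell you whether the values are correct.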

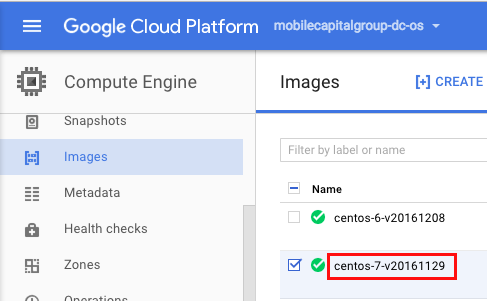

You may also want to tweak the template settings of the master and agent nodes, but it is not required. I changed the “gcloudimage” property to match the CentOS 7 image that I found in the Compute Engine > Images listing.

Install the DC/OS Cluster

You are now ready to install the DC/OS cluster using the Ansible script.

ansible-playbook -i hosts install.yml

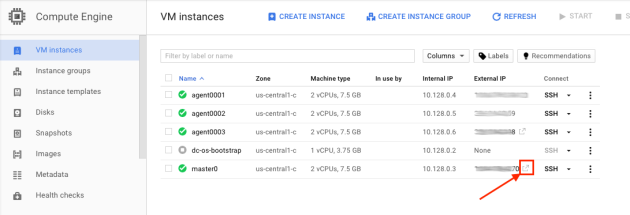

You’ll see some error messages as it attempts to shut down instances that don’t yet exist, and then it will begin creating and configuring the new instance(s). When the script completes, the new instance(s) will appear in the Compute Engine VM Instance list.

Install the Private Agent Nodes

As previously mentioned, I created two private nodes with the following command.

ansible-playbook -i hosts add_agents.yml --extra-vars "start_id=0001 end_id=0002 agent_type=private"
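If you add agents more than once, a small wrapper keeps the ID padding consistent. This is a sketch with a hypothetical function name; it only prints the command, and it accepts only the two agent types used in this post.

```shell
#!/bin/sh
# Build (but do not run) the add_agents.yml command for a range of agent IDs.
# Pass IDs as plain decimals (1, 2, 3); they are zero-padded to four digits
# to match the agent[0000:9999] pattern in the hosts file.
add_agents_cmd() {
    start=$1
    end=$2
    type=$3
    case $type in
        private|public) ;;
        *) echo "agent_type must be private or public" >&2; return 1 ;;
    esac
    printf 'ansible-playbook -i hosts add_agents.yml --extra-vars "start_id=%04d end_id=%04d agent_type=%s"\n' \
        "$start" "$end" "$type"
}

add_agents_cmd 1 2 private
```

Printing instead of executing lets you check the range before any instances are created.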

Install the Public Agent Nodes

If you are using the free trial, things get a little tricky here. Your public agent node creation will fail because it puts you over the CPU quota. To get around the quota, I stopped agent0002 first, and then I created the public node. Once the public node was created, I shut down the bootstrap machine to free up one CPU, and then I restarted agent0002. Here is the command to create the public node.

ansible-playbook -i hosts add_agents.yml --extra-vars "start_id=0003 end_id=0003 agent_type=public"
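The quota juggling is easier to follow as arithmetic. The sketch below uses the CPU counts from this post (a 1-CPU n1-standard-1 bootstrap machine and 2-CPU nodes everywhere else) and assumes, as I observed, that stopped instances do not count against the eight-CPU trial quota.

```shell
#!/bin/sh
# CPU counts taken from this post; the quota is the free-trial limit.
QUOTA=8
BOOTSTRAP=1
MASTER=2
PRIVATE_AGENT=2
PUBLIC_AGENT=2

# Sum the CPU counts passed as arguments.
total() {
    sum=0
    for cpus in "$@"; do
        sum=$(( sum + cpus ))
    done
    echo "$sum"
}

# Everything running at once: one CPU over quota.
echo "all nodes: $(total $BOOTSTRAP $MASTER $PRIVATE_AGENT $PRIVATE_AGENT $PUBLIC_AGENT) of $QUOTA"
# agent0002 stopped while the public agent is created.
echo "during public agent creation: $(total $BOOTSTRAP $MASTER $PRIVATE_AGENT $PUBLIC_AGENT) of $QUOTA"
# Bootstrap stopped, agent0002 restarted.
echo "final cluster: $(total $MASTER $PRIVATE_AGENT $PRIVATE_AGENT $PUBLIC_AGENT) of $QUOTA"
```

The three lines report 9, 7, and 8 CPUs, which is why the bootstrap machine has to be stopped before agent0002 can come back.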

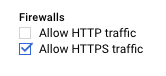

Once the public node is running, edit the instance configuration from the GCE VM Instance page and check the option that allows HTTPS traffic (temporarily do the same for the master0 instance).

Open the DC/OS Console

Now we are ready to launch the DC/OS console. Eventually, we will do this with a virtual host pointed at the Marathon load balancer running on the public agent node, but for now, we will open the DC/OS dashboard directly from the master node.

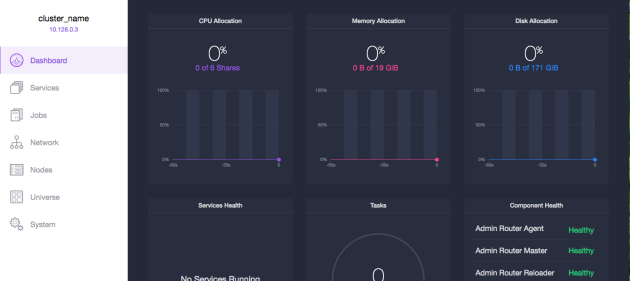

After signing in with the Google account that you used for Google Cloud Platform, you will see something like this.

Installing the Marathon Load Balancer

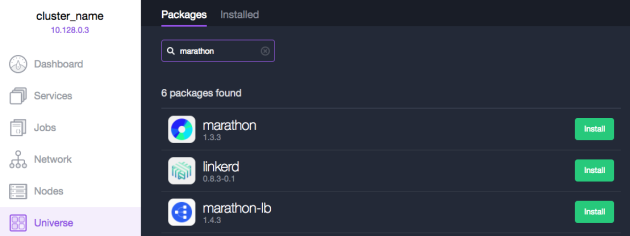

To try it out, let’s install the Marathon Load Balancer on the public agent node. Select Services from the left side menu, click Deploy Service and then click the link that says install from Universe. When the page opens, search for “marathon.” The search results should look something like this.

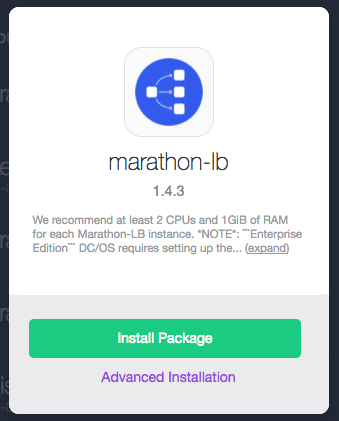

Click Install on the marathon-lb row. When the dialog opens, click Install Package.

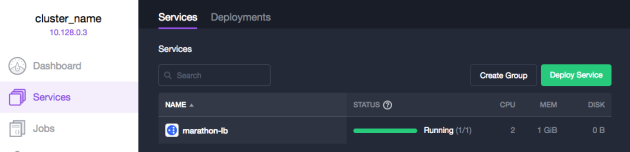

Return to the Services page, and in a moment, you should see this.

You are now ready to begin using DC/OS with your own artifacts, which I will discuss more in my next post.
